Most of the time, when we think about testing an AI or machine learning model, we think about accuracy, or maybe precision and recall. We presume that these measures tell us how correct our product is. But a correct model is not the same as a satisfied user. Let me tell you a short story about how we created a great model for tagging knowledge that produced poor results in the first user tests. And by the way, this story has a happy ending.

Smart product with machine learning

We developed a smart product full of artificial intelligence and machine learning for ABN AMRO, a large bank in The Netherlands. With it, bank employees can create Standard Operational Procedures (SOPs) and share them with co-workers. Co-workers can search for the right SOPs, and the system also recommends the right SOPs at the right moment.

Better, more tags and faster

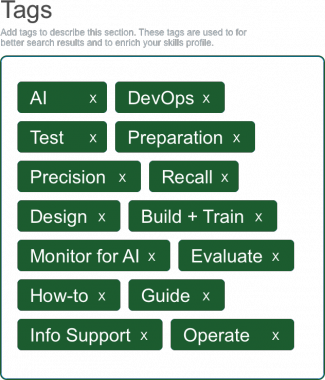

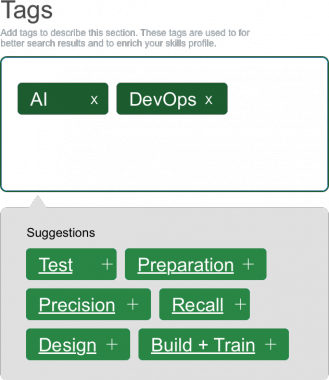

One of the key elements of our model is the use of tags. A tag is a keyword or term assigned to a piece of information. This kind of metadata helps describe an item. The search, recommendation and profiling algorithms lean heavily on these tags. Long story short, they’re important for the system, so it’s important that users add the right tags to the information.

To improve the tagging process, we created a tag recommendation model. With the help of machine learning algorithms and Natural Language Processing, we extract all possible tags from the description and the procedure. The recommendation model then classifies each tag as ‘relevant’ or ‘not relevant’. This should result in better tags, more tags and faster tagging.
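To make the two steps concrete, here is a minimal Python sketch of "extract candidates, then classify them". It is not the model we built: the stop-word list, the function names and the simple frequency rule are all assumptions for illustration, standing in for a trained classifier.

```python
import re
from collections import Counter

# Hypothetical stop-word list; a real system would use a much fuller one.
STOP_WORDS = {"a", "an", "and", "the", "to", "of", "for", "in", "on", "is", "how"}


def extract_candidates(text):
    """Count candidate tags: single words and two-word phrases without stop words."""
    words = re.findall(r"[a-z]+", text.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS)
    for first, second in zip(words, words[1:]):
        if first not in STOP_WORDS and second not in STOP_WORDS:
            counts[f"{first} {second}"] += 1
    return counts


def classify_candidates(counts, min_count=2):
    """Label each candidate 'relevant' or 'not relevant'.

    Assumption: a plain frequency rule stands in for the trained model.
    """
    return {
        tag: "relevant" if n >= min_count else "not relevant"
        for tag, n in counts.items()
    }


sop_text = (
    "How to block a credit card. Block the credit card in the card system "
    "and inform the customer."
)
labels = classify_candidates(extract_candidates(sop_text))
relevant = sorted(tag for tag, label in labels.items() if label == "relevant")
print(relevant)  # ['block', 'card', 'credit', 'credit card']
```

Even on this toy text, a short description already yields four 'relevant' candidates, which hints at how quickly a list of suggestions can grow.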

User testing our Machine Learning model

We were as confident as we could be. This was a neat model. All the measures were looking good. Precision high, pretty good recall. The user interface was slick and during typing the system recommended tag after tag after tag. Let the user testing begin. Nothing can go wrong, right?

Wrong! Our usability testing expert came back with the first results and they were disappointing. Yes, we collected more tags. Yes, the tags were more relevant, so better. Yes, the users liked the idea of tag recommendations. But no, it wasn’t faster. Even worse, it took every user in the test far more time to complete the tagging.

Problem and solution

The problem was that we showed all relevant tags, and sometimes this added up to more than 10 or 15 tags. The users read every tag and had to decide for each one whether it was the right tag. That slowed them down.

After some test rounds we found the perfect solution for this problem. We separated the selected tags from the suggested tags and showed fewer tags. It took some testing to find the sweet spot for the number of tags to show. It wasn’t a game of just picking a number: the sweet spot is different for each piece of information. After round 4 we came up with the following solution, and now we have better tags, more tags, and tagging that is faster and easier than ever.
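One way to get a per-item number of suggestions, rather than a fixed count, is to cut the list relative to the best-scoring tag. The Python sketch below illustrates that idea only; the function name, the `max_shown` cap and the `min_ratio` threshold are hypothetical and are not the values or the exact rule we ended up with.

```python
def suggestions_to_show(scored_tags, selected, max_shown=5, min_ratio=0.6):
    """Pick the suggested tags to display, best first.

    scored_tags: dict mapping tag -> relevance score between 0 and 1
    selected:    tags the user already chose (shown separately, not suggested again)
    max_shown, min_ratio: hypothetical tuning knobs, to be found by user testing
    """
    remaining = {t: s for t, s in scored_tags.items() if t not in selected}
    if not remaining:
        return []
    ranked = sorted(remaining.items(), key=lambda item: item[1], reverse=True)
    # Keep only tags that score close enough to the best remaining tag,
    # so the length of the list adapts to each piece of information.
    cutoff = ranked[0][1] * min_ratio
    return [tag for tag, score in ranked if score >= cutoff][:max_shown]


scores = {
    "credit card": 0.95,
    "block": 0.90,
    "fraud": 0.70,
    "customer": 0.50,
    "system": 0.30,
}
print(suggestions_to_show(scores, selected={"block"}))  # ['credit card', 'fraud']
```

With a few clear winners the list stays short, while an item with many similarly scored tags shows more of them, up to the cap. The right values for both knobs can only come from testing with real users.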

Conclusion

So although the recommendation model performed well, it didn’t satisfy the user. We had to adjust the model to create the best solution for the user. We often think we know what our model does, but we never know how users react to it, unless we test it.

Want to know more about building and testing great machine learning models? Check out www.infosupport.com/ai.
